lol. has anyone found ways to optimize starfield for their pc, like reducing stuttering, FPS drops, etc?

I’ll believe they actually optimized their PC port when their PC ports start showing some signs of effort at being actual PC ports

No FOV slider after FO76 had one is, to me, a great sign of how little Bethesda actually cares about the platform that keeps them popular (thanks to mods)

They don’t want to put the work in for the biggest benefit of PC gaming.

I don’t think any PC should be able to run a game well at max settings on release. Leave room for it to still look good five years from now.

And have the bare bones setting be able to work on shitty systems that do need upgraded.

Bethesda just wants to make games that run on a very small slice of PCs, and who can blame them when they can’t even release a stable game on a single console? They’re just not good at

I don’t think any PC should be able to run a game well at max settings on release. Leave room for it to still look good five years from now.

This is the mentality they want you to have. And it’s a shit one. PCs should be able to run any game well when it comes out.

at max settings

Waaaaay too many people want that endorphin hit from setting everything to ultra.

Even if Medium looked exactly the same.

I’m gonna make a game where the graphics are basically the same at all presets, but with different filters. Crank the bloom and add a sepia filter, call it pro max or something.

“But this one goes to eleven”

The industry kind of did it to themselves. We had a really long period where 1080p was the default resolution and games really didn’t even try to push graphics at all. Things kind of plateaued post-Crysis for about 10 years before I even felt like we had a game that looked significantly better than it did.

So a lot of people have gotten used to being able to hit ultra settings day 1 because their entire gaming life that’s been possible.

On a 10 year old potato

If you’ve got a 5 year old pc, sorry you shouldn’t expect to play on max, let alone anything over medium.

People need to temper their expectations about what a PC can actually do over time.

We are talking modern hardware, nobody is expecting a 5 year old PC to be running maxed out games anywhere near as well as the latest hardware should be. People are just more and more willing to bend over and accept shit given to them, there’s no reason Starfield couldn’t be running better, they certainly had the capabilities at Bethesda to make it so.

Read the comment chain again, because you missed the person’s original point….

They talk about old and modern hardware, you can’t just ignore half their point.

I think you are imagining modern hardware to just be like a 4090. Any modern hardware here meaning current generation GPU/CPUs. They should be able to run at max settings yes. The performance match ups of low to mid range hardware of this generation overlaps with mid to high of the last generation (and so on), so just talking years here doesn’t really translate.

People holding on to their 1080tis obviously shouldn’t be expecting max settings, but people with 3080s, 4070s, 6800XTs (even 2080ti/3070s), should absolutely be able to play on max settings. Especially games like Starfield that are not exactly cutting edge, there’s a lot older games that had a lot of work put into performance from the start and they look and run a lot better than this.

I have an i9 9900k and a 4070ti and can play it butter smooth max settings in 4k 100% rendering. The CPU is definitely starting to show its age, but I haven’t had any complaints about starfields performance.

That said I can’t fucking stand consoles. I get that companies would be stupid to not sell something to as many people as possible, but I’m so sick and tired of seeing good games handicapped because they need to run on a rotten potato Xbox from 10 years ago or whatever…

Remember when “could it run crysis” was a good thing to understand? Now everyone acts like max settings should run on 5 year old gpus and complains about devs instead.

We’re on PCs guys, there’s a shit load of variables as to why a game might run poorly on your device, there is absolutely no way a game dev can (or should) be able to account for all those variables. If you want a standard gaming experience with preset settings that run fine, get a console.

“Could it run Crysis” was a pro for your computer, but it was also always a bit of a dog on the fact that the game was largely unplayable for a lot of people.

It was because it pushed the boundaries on what was possible with current gen hardware at the time, that didn’t make it unoptimized or a bad game, but that concept seems to be lost on a lot of people.

Are you seriously suggesting that Starfield pushes any boundary? The game still uses the god forsaken “boxes” from Oblivion, every slice of world pales in comparison to both the size and quality of like, all modern open worlds of comparable budget.

deleted by creator

There literally are settings lmfao

How would you expect them to develop a game targeted towards hardware they can’t test on due to it not existing? Latest PC hardware should be able to run max or near max at release.

I don’t think you understand how all those settings work in the option menu…

Or anything that I said in my comment.

It’s been explained to other people already

People would rather be angry than know how things work.

Yeah. But at least you can use the console command (~ tilde, as usual) to change the FOV. Default values are 85 for first person and 70 for third person. Range is 70-120.

SetINISetting "fFPWorldFOV:Camera" "85"
SetINISetting "fTPWorldFOV:Camera" "70"

When you’re happy with what you got, issue

SaveIniFiles

Or you can just edit
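If you change FOV a lot, the console lines quoted above can be generated rather than retyped. This is only a rough sketch: `fov_console_commands` is a made-up helper name, and the 70-120 limit is simply the range mentioned in the comment, not something checked against the game.

```python
# Hypothetical helper: prints the console lines quoted in the comment above.
# The 70-120 range is taken from that comment, not verified against the game.
FOV_MIN, FOV_MAX = 70, 120


def fov_console_commands(first_person=85, third_person=70):
    """Return the console lines that set both FOV values and save them."""
    for name, value in (("first person", first_person),
                        ("third person", third_person)):
        if not FOV_MIN <= value <= FOV_MAX:
            raise ValueError(f"{name} FOV {value} is outside {FOV_MIN}-{FOV_MAX}")
    return [
        f'SetINISetting "fFPWorldFOV:Camera" "{first_person}"',
        f'SetINISetting "fTPWorldFOV:Camera" "{third_person}"',
        "SaveIniFiles",
    ]


if __name__ == "__main__":
    # Paste the printed lines into the in-game console one at a time.
    print("\n".join(fov_console_commands(100, 90)))
```

The defaults match the values quoted above (85 and 70), so calling it with no arguments gives you the lines to reset everything.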

The game, without using dlss mods, runs at 30fps with some stutters on my system using the optimized settings from hardware unboxed (linked) on a 4060ti. If I install the DLSS-FG mods I immediately get 60-90 fps in all areas.

Here’s the rub: I’m not an FPS whore. It’s generally a good experience for this game at 30 FPS assuming you use a gamepad/xbox controller. With KB+M it gets really jittery and there’s input lag. The game was clearly playtested only using a gamepad. The reactivity of a mouse for looking is much different and the lower FPS the game is optimized for becomes harder to digest.

I have also tested on my 1650ti max-q, and a 1070. Both the 4060ti and 1070 were on an egpu.

My system has an 11th gen i7-1165g7 and 16 gb ddr4 ram. I play at 1080P in all cases.

For the 1650ti and the 1070 the game runs fine IF I do the following

- i set the preset to Low (expected) and THEN turn scaling back up to maximum/100% and/or disable FSR entirely

- set indirect shadows to something above medium (which allows the textures to run normally, otherwise they are blurry).

Even on the 4060ti, it says it’s using 100% of the GPU but it is only pulling like 75-100 W, when it would normally pull 150-200 W under load easy.

TL;DR - this game isn’t optimized, at least for NVIDIA cards. They should acknowledge that.
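For anyone who wants to check the "100% utilization but low power draw" observation on their own NVIDIA card, here is a minimal sketch that polls `nvidia-smi`. The query fields (`utilization.gpu`, `power.draw`, `power.limit`) are standard `nvidia-smi` ones, but the function names and the thresholds in `looks_underfed` are arbitrary assumptions for illustration, and some cards report `[N/A]` for power, which this does not handle.

```python
import subprocess

# Standard nvidia-smi query; prints e.g. "100, 87.21, 165.00" per GPU.
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,power.draw,power.limit",
    "--format=csv,noheader,nounits",
]


def parse_sample(line):
    """Turn one CSV line from nvidia-smi into a dict of floats."""
    util, draw, limit = (float(field) for field in line.split(","))
    return {"util_pct": util, "power_w": draw, "limit_w": limit}


def looks_underfed(sample, util_floor=95.0, power_ratio=0.6):
    """True when the GPU reports near-full load yet draws well under its limit.

    Both thresholds are guesses for illustration, not vendor guidance.
    """
    return (sample["util_pct"] >= util_floor
            and sample["power_w"] < power_ratio * sample["limit_w"])


def read_sample():
    """Run nvidia-smi once and parse the first GPU's line."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_sample(out.stdout.splitlines()[0])
```

Calling `looks_underfed(read_sample())` in a loop while the game is running would flag the pattern described above. It only shows that the card is not being pushed hard, not why.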

I can at least change the FOV with an ini file edit, but there’s no way to adjust the horrible 2.4 gamma lighting curve they have… It’s so washed out on my display, it’s crazy!

There is actually https://www.nexusmods.com/starfield/mods/281

I’m glad everyone is beta testing this game for me.

I’ll wait until it’s $20 on steam with all DLC for spaceship horse armor.

Welp. I just found my alt account, or at least long lost twin.

Thank the god emperor I wasn’t vestigial.

I tried to reabsorb you, but chaos knights…

Same but I’m going to wait until it’s $5. Can’t give Todd too much money now.

Might as well pirate it at this point

I did that with Skyrim and regretted it.

Yea? How so?

I use Steam achievements as a gauge of how much of my library I’ve actually played. I bought Skyrim after I had played it and now it’s sitting in my library with barely any achievements completed even though I’ve already played through the entire game once. Just kind of soured the whole thing for me and I should have been patient.

Might as well wait for the 5 year anniversary edition with paid mod content included

lol same.

80 fucking euro for the quality of that game is crazy.

3€ Titanfall 2 Ultimate.

Yup. Having it again on Steam was worth it.

I’m waiting at least 6 months to even consider playing this game. More likely over a year.

Yeah my SO and I are too busy with bg3, maybe by the time we get to it it’ll have a coop mod ¯\_(ツ)_/¯

I guess Steve from gamer nexus should upgrade his computer when doing test runs? This guy I swear.

Just get a 4090ti to play the game that looks like it’s made in 2011. What’s the hold up?

Already preordered a 7900XTXTX ready to run Starfield at 720p 144fps in 3 years.

Well I pre preordered my xfx rx 7900 xtxtxtxtxtxtxtxtxtxtx

It runs great on my 4080. Of course everything does, it’s a nice card. Also puts off way less heat than my old 3090

such a todd move, always with a shit eating grin. “Oh you can’t run our game? jeez, have you tried not being poor?”

Thing is, I did upgrade my PC. Starfield runs acceptably, but not to the level it should given my hardware.

I’d much rather hear that they’re working on it in a patch rather than be gaslit into thinking it already runs well.

I would agree. They should acknowledge it’s not well optimized and that they are working on fixing it, especially with Nvidia cards. It rubs me wrong that they are in denial here, especially given their rocky release history.

Heck, I think that’s 50% of the reason they didn’t even want co-op or any netcode. FO76 was a nightmare on release largely because of that.

While I’m probably in a small demographic here, I’m sitting with a perfectly capable PC, not a gaming supercomputer or anything but a healthy upgrade from standard, and when I started hearing about Starfield I got really excited.

…then I saw all this stuff about compatibility and performance, and when I tried to see where my equipment stood, I was left with the impression that if I wasn’t a PC building enthusiast, it was going to be hard to figure it out, and if my computer wasn’t pretty high end and new, it was probably going to struggle.

And now hearing more about the performance issues, I’ve basically given up even considering the game based on possible performance and compatibility issues.

I’m playing it on a 9th Gen i5 and a 1060ti. Runs fine.

What do you play on? The reality is it’s pretty serviceable, and it is one of those games where FPS != performance or experience.

I’ve played most of the game at 27-35 fps. It’s been mostly fine as long as I’m not obsessing about the fps counter. I frankly just turn it off unless something bad starts happening.

I’ve even figured out ways to get it mostly at 30fps on a pretty low power card.

At those settings, you might be seeing a consistent 30fps. You tend to get used to that if that’s what you see all the time.

What people in somewhat higher tier hardware are seeing is an average >60fps, but with sudden dips down below 35fps. That inconsistency is very noticeable as stutters. This seems to happen even all the way up to an rtx4090 running at 1080p. The game seems to hit some bad CPU bottlenecks, and no CPU on the market can fix it.

No doubt I agree. I can definitely get the game to do similar with KB+M. The response time, sensitivity and precision of a mouse for camera movements is much faster and more accurate than a game pad.

Honestly one of the biggest things I did was just use a controller. It smoothed the game out quite a bit. It’s then using motion blur (slightly) and the stick acceleration to smooth out the frametime, and the input delays make it much less noticeable. Honestly I’ve become convinced that’s the primary way Bethesda tests their games. I started doing this with Fallout 76 for the exact same reasons.

Those sudden movements seem to cause the system to have to render or re-render and re-calculate parts of the world faster with a KB+M. Thus the dips and stutters become more noticeable.

I’m not excusing Bethesda here. I think it’s bullshit. I think they should optimize their code. At the least they should goddam acknowledge the issue and not try and act like this is normal. It’s not. I’m also merely trying to portray a way that you can play and enjoy this game without totally raging out in frustration because Bethesda can’t really do their job, assuming this is a title you wanted to see and enjoy and are willing to “support” a company with such a rich history of putting out products like this. It’s not really new. Skyrim on PC had CTD issues on release, Fallout New Vegas did too, along with a crash once RAM usage exceeded 4GB because of the 32-bit barrier. Modders had to fix that shit first. Heck, Fallout 4 was heralded as a success because it at least was playable day 1.

27-35fps is low enough for me to wait 5-10 years and brute force it with future hardware, if it’s on sale cheap enough. It shouldn’t run this badly for how little it impresses graphically.

That’s what I am saying though. If you don’t have an FPS counter up, the way this game runs at 27-35 is still smoother than 60-75 on other titles (e.g. RDR2).

I mean, you do you, but the raw FPS numbers aren’t necessarily accurate depictions of how the game runs. It’s like they fuck with frametimes and the like. Which makes sense, considering that if you unlocked or removed vsync from old titles, physics would get wacky, lock pick minigames were super fast, etc.

That said, I have noticed with a number of Bethesda releases that certain aspects run smoother on a controller. With Fallout 4/3/NV I was able to brute force performance to run fine on KB+M in 90% of areas. It would still get choppy downtown etc. It was when 76 came out that I tried playing just on a controller. Something about the stick acceleration when moving the camera was much smoother, and it made the overall experience better. The same applies here. As soon as I just moved to a controller, it’s really pretty enjoyable. It doesn’t seem to be a “fast twitch” style game like, say, CS:GO, Battlefield etc. are.

Though I would totally understand if some folks aren’t going to make such concessions. Just seems to me Bethesda is one of those studios that really only playtests/develops for controller based play despite “supporting” alternative inputs.

FWIW I already have a controller for other games (like Elite: Dangerous or other flight games). So it’s no biggie for me to change up.

This seems to be the new normal though unfortunately.

It’s normal that a 5-10 percent generational improvement on a product (6800 XT -> 7800 XT) is celebrated and seen as a good product, when in reality it’s just a little less audacious than what we had to deal with before and with Nvidia.

It’s normal that publishers and game studios don’t care about how their game runs, even on console things aren’t 100 percent smooth or often the internal rendering resolution is laughably bad.

The entire state of gaming at the moment is in a really bad place, and it’s just title after title after title that runs like garbage.

I hope it’ll eventually stabilize, but I’m not willing to spend 1000s every year just to be able to barely play a stuttery game with 60 fps on 1440p. Money doesn’t grow on trees, though AAA game studios certainly seem to think it does.

Yes GI and baked in RT / PT is expensive, but there need to be alternatives for people with less powerful hardware, at least until accelerators have become so powerful and are common in lower end cards to make it a non issue.

The Nvidia issue might be more Nvidia’s fault. All three major GPU companies (including Intel now) work with major publishers on making games work well with their products. This time around, though, Nvidia has been focused on AI workloads in the datacenter–this is why their stock has been skyrocketing while their gaming cards lower than a 4080 (i.e., the cards people can actually afford) have been a joke. Nvidia didn’t work much with Bethesda on this one, and now we see the result.

Nvidia is destroying what’s left of their reputation with gamers to get on the AI hype train.

Not entirely true.

Starfield was amd exclusive, so Intel didn’t get the game (or support it) until early access.

Nvidia probably didn’t get as much time to optimize the game or work with Bethesda as they normally would.

Nvidia didn’t work much with Bethesda on this one, and now we see the result.

Is there a source to this? Arguably the game is an AMD exclusive, which is a deal Beth/MS made, so it sounds like maybe they weren’t the inclusive ones in that relationship.

They didn’t optimize it for consoles either. Series X has equivalent of 3060 RTX graphical grunt, yet it’s capped to 30fps and looks worse than most other AAA games that have variable framerates up to 120fps. Todd says they went for fidelity. Has he played any recent titles? The game looks like crap compared to many games from past few years, and requires more power.

The real reason behind everything is the shit they call Creation Engine. An outdated hot mess of an engine that’s technically behind pretty much everything the competition is using. It’s beyond me why they’ve not scrapped it - it should have been done after FO4 already.

Weird how everyone jokes how shitty Bethesda developers are but everyone’s surprised how bad Starfield runs.

And don’t forget the constant loading screens. A game that has so many of them shouldn’t look this bad and run this poorly.

Look, I agree with everything from the first paragraph, and the CE does seem to have a lot of technical debt that’s particularly shown in Starfield, which is trying to do something different than the other games. The engine being “old” is a bad argument though (I know you didn’t make it, but it’s often said), and it being “technically behind” other engines isn’t really true in all ways.

The Creation Engine has been adapted by Bethesda to be very good at making Bethesda games. They know the tools and the quirks, and they can modify it to make it do what they want. It has been continuously added onto, just as Unreal Engine has been continuously added onto since 1995. The number after the engine name doesn’t mean anything besides where they decided to mark a major version change, which may or may not include refactoring and things like that. I have a guess that CE2 (Starfield’s engine) is only called CE2 because people on the internet keep saying the engine is old and tell them to use UE, which is approximately the same age as Gamebryo but adds numbers to the end.

which is trying to do something different than the other games.

The other games from Bethesda, right?

Yeah, I meant Bethesda games, but it’s different from what most games are trying to do. The exception being Elite Dangerous and Star Citizen (which the Kickstarter was more than 10 years ago at this point…).

And Empyrion.

Correct me if I’m wrong, but don’t they limit frametimes so they can reduce TV stuttering? The NTSC standard for TVs is 29.97 or 59.94 fps. I assume they chose 30fps so it can be used more widely, and if it’s scaled to 60 it would just increase frametime lag. Again, I’m not sure.

Also, comparing CE2 to CE1 is like comparing UE5 to UE4. Also, I don’t remember, but doesn’t Starfield use the Havok engine for animations?

Edit: rather than downvote just tell me where I am wrong

Not to put too fine of a point in it but you’re wrong because your understanding of frame generation and displays is slightly flawed.

Firstly most people’s displays, whether it be a TV or a monitor, are at least minimally capable of 60hz which it seems you correctly assumed. With that said most TVs and monitors aren’t capable of what’s called variable refresh rate. VRR allows the display to match however many frames your graphics card is able to put out instead of the graphics card having to match your display’s refresh rate. This eliminates screen tearing and allows you to get the best frame times at your disposal as the frame is generally created and then immediately displayed.

The part you might be mistaken about from my understanding is the frame time lag. Frame time is the inverse of FPS. The more frames generated per second, the less time in between the frames. Now under circumstances where there is no VRR and the frame rate does not align with a display’s native rate there can be frame misalignment. This occurs when the monitor is expecting a frame that is not yet ready. It’ll use the previous frame or part of it until a new frame becomes available to be displayed. This can result in screen tearing or stuttering and yes, in some cases this can add additional delay in between frames. In general though, a >30 FPS framerate will feel smoother on a 60hz display than a locked 30 FPS, where every frame is guaranteed to be displayed twice.
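To put rough numbers on the frame time point above, here is a small sketch. It assumes a very simplified model (perfectly even render times, vsync on, no VRR, each frame shown at the first refresh after it is ready), and `refreshes_per_frame` is just an illustrative name, so treat it as a toy and not how any real driver schedules frames.

```python
def frame_time_ms(fps):
    """Frame time is the inverse of FPS, expressed here in milliseconds."""
    return 1000.0 / fps


def refreshes_per_frame(fps, refresh_hz=60, frames=6):
    """How many display refreshes each rendered frame stays on screen.

    Toy model: frame k finishes at (k + 1) / fps seconds and is shown at the
    first refresh tick at or after that moment (vsync, no VRR).
    """
    # Integer ceiling division avoids floating point edge cases.
    shown_at = [-(-(k + 1) * refresh_hz // fps) for k in range(frames + 1)]
    return [later - earlier for earlier, later in zip(shown_at, shown_at[1:])]


if __name__ == "__main__":
    for fps in (30, 45, 60):
        print(fps, "fps:", round(frame_time_ms(fps), 1), "ms per frame,",
              "refreshes per frame:", refreshes_per_frame(fps))
```

Under this model a locked 30 fps holds every frame for exactly two refreshes, 60 fps holds each for one, and 45 fps alternates between one and two, which is the misalignment described above.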

Thanks, i was recently reading about monitor interlacing and i must have jumbled it all up.

Todd said they capped it at 30 for fidelity (= high quality settings). Series X supports variable refresh rate if your TV can utilize it (no tearing). Series X chooses applicable refresh rate which you can also override. All TVs support 60, many 120, and VRR is gaining traction, too.

Let’s take Remnant II, it has setting for quality (30) balanced (60) and uncapped - pick what you like.

CE is still CE, all the same floaty npc, hitting through walls, bad utilisation of hardware have been there for ages. They can’t fix it, so it’s likely tech debt. They need to start fresh or jump to an already working modern engine.

That’s for movies, I don’t remember why, but films can be fine in 30fps. Games are kinda horrible at 30fps, all TVs I know have 60Hz or higher refresh rate for all PC signals

IIRC it’s just because we’re used to the lower framerate in movies. If you look up some 60 FPS videos on YouTube you’ll notice how much smoother it looks.

Personally, I’d wish sports broadcasts would be in 60 FPS by default. Often the action is so fast that 30 FPS just isn’t enough to capture it all.

Higher framerates make things look more real.

This is fine if what you’re looking at is real, like a football match, but what the likes of The Hobbit showed us is that what you’re actually looking at is Martin Freeman with rubber feet on. And that was just 48fps.

24fps cinema hides all those sins. The budget of the effects department is already massive. It’s not ready to cover all the gaps left by higher framerates.

Even in scenes with few effects the difference can be staggering. I saw a clip from some Will Smith war movie (Gemini Man, I think), and the 120fps mode makes the same scene look like a bunch of guys playing paintball at the local club.

Movies have some blur in their frames. Lots of directors have justified this on the basis of looking more “dreamy”. No matter if you buy that or not, the effect tends to allow lower fps to look like smooth motion to our eyes.

It also helps that they lock in that framerate for the whole movie. Your eyes get used to that. When games suddenly jump from 120fps down to 65fps, you can notice that as stutter. Past a certain point, consistency is better than going higher.

Starfield on PC, btw, is a wildly inconsistent game, even on top tier hardware. Todd can go fuck himself.

30fps in films looks okay because we’re used to that. Early Hollywood had to limit framerates because film wasn’t cheap.

60fps is better for gaming because it allows the game to be more responsive to user input.

This game is not pretty enough to push my 3080 ti as hard as it does. I get around 40fps at max settings.

Do you have a matching high-end CPU, MOBO with a fast FSB, an NVMe drive, and good RAM? Because a PC is only as good as its slowest component.

Then the GPU would be bottlenecked and not run at full power.

That’s my point.

I mean, then the game couldn’t push his 3080 ti to the limits.

What… are you doing in the background? I’ve got a 3070 and 4k monitor, and I get between 50 and 60 FPS with all the settings I can fiddle with ~~disabled~~ enabled. I use RivaTuner to pipe statistics to a little application that drives my Logitech G19 with a real-time frame graph, CPU usage, memory load and GPU load, and it uses multi cores pretty well, and generally makes use of the hardware.

– edit – Thanks for pointing out I made totally the wrong comment above, changing the meaning of my comment 180°…

Well he said max settings, not disabled most settings

Thanks, brain fade caused me to write totally the opposite to what I meant.

Well I get that framerate with everything maxed at 3440x1440. I have turned things down to get a higher framerate, but it shouldn’t be struggling. I don’t have anything else running other than the usual programs that stay open.

Then I guess it could be a vram problem? Same res, no res scaling, 3090, no performance problems. Yes, the 90 is a bit faster but not much. But a lot more vram.

I installed an optimized textures mod and instantly improved my performance by like… 20 frames, maybe more.

I have an RX 6600 XT that can run Cyberpunk on high no problem. C’mon Bethesda, the game is really fun, but this is embarrassingly bad optimization.

When there are benchmarks showing 0.1% lows at <40fps at 1080p on a goddamn 4090, no, Todd, the problem is your engine.
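For anyone unsure what a "0.1% low" actually measures, here is a minimal sketch. Benchmark tools differ in the exact definition; this one assumes the low is the average FPS over the slowest X% of frames, and the frame times in the example are made up, not measurements from Starfield.

```python
def average_fps(frame_times_ms):
    """Average FPS over a capture of per-frame render times in milliseconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percent_low_fps(frame_times_ms, percent):
    """Average FPS over the slowest `percent` of frames (one common definition)."""
    worst_first = sorted(frame_times_ms, reverse=True)
    count = max(1, round(len(worst_first) * percent / 100))
    slowest = worst_first[:count]
    return 1000.0 * len(slowest) / sum(slowest)


if __name__ == "__main__":
    # Synthetic capture: 999 smooth frames at 10 ms plus a single 40 ms hitch.
    capture = [10.0] * 999 + [40.0]
    print("average:  ", round(average_fps(capture), 1))
    print("1% low:   ", round(percent_low_fps(capture, 1), 1))
    print("0.1% low: ", round(percent_low_fps(capture, 0.1), 1))
```

The point of the metric is that a single hitch barely moves the average but dominates the 0.1% low, which is why a high average can still feel stuttery.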

Kiss my ass Todd, my 6700xt and Ryzen 5 5600x shouldn’t have to run your game at 1440p native with low settings to get 60fps

Look man I’m not trying to defend Howard here or imply you’re tech illiterate, or that all your issues clear up 100%, but have you by chance updated your drivers? Mine were out of date enough that Starfield threw up a warning, which I ignored, and I was not having a good experience, same as you (iunno your RAM or storage config, but I was running on an average NVMe and 32 gigs of RAM with a 5600x and 6700xt). But after I updated, a lot of the issues smoothed out, not all, but most. At 60 fps average with a mix of med/high at 1080p though. Maybe worth a try?

I’ve checked for new drivers daily since the game came out; the ones I have are from mid-August though, so maybe I’ll double check again

I’m on a 5700xt and game runs around 60 fps at 1080p everything cranked and no FSR or resolution scale applied, so I’d say either your drivers are out of date or something else is wrong there imo

I’ll have to double check, nothing immediately stands out as wrong, the game is on an NVME, I’m running 3600mhz cl14 memory, and I just redid the thermal paste on my CPU. With all that being said, most other games I play get 100+fps, including Forza Horizon 5 with its known memory leak issue and Battlefield 5, so I don’t think anything is wrong with the system

They did lol, and that’s a really dumb question by a tech illiterate. Optimization isn’t a boolean state, it’s a constant ongoing process that still needs more work.

So what’s this about modders immediately being able to improve performance with mods within a week after release?

It’s usually several small things, like stopping the game from reading a 5GB file several times over and over again.

They only meant to say that Bethesda did optimize throughout the development process. You can’t do gamedev without continually optimizing.

That does not mean Bethesda pushed those optimizations particularly far. And you can definitely say that Bethesda should have done more.

Point is, if you ask “Why didn’t you optimize?”, Todd Howard will give you a shit-eating-grin response that they did, and technically he is right with that.

Also optimization happens between a minimum and a maximum. If Bethesda defines that the game should have a certain minimal visual quality (texture and model resolution, render distance, etc), that will lead to the minimum that hardware has to offer to handle it.

What modders so far did, was to lower the minimum further (for example by replacing textures with lower resolutions). That’s a good way to make it run on older hardware, but it’s no optimization Bethesda would or should do, because that’s not what they envisioned for their game.

That they know they won’t do

My entire comment is about why your response doesn’t make sense: they do, and it’s not a process that’s ever “done”. It’s a question of how optimized it is and whether it runs well on targeted specs.

https://www.youtube.com/watch?v=uCGD9dT12C0

Get a new game engine, Todd. Bethesda owns id Software. id Tech is right there.

IdTech isn’t built for open world gameplay

Rage??? Wasn’t Rage open world?

Kind of. It was more smaller locations linked together by loading screens a la Borderlands 2 rather than the typically seamless worlds Bethesda are usually known for. Although you could definitely argue that this was the approach taken by Bethesda for Starfield.

Wasn’t the drivable overworld one big map? I honestly can’t remember now, it’s been so long since I played it.

I do remember them harping on about “megatextures” and what this seemed to mean is that just turning on the spot caused all the textures to have to load back in as they appeared. I dunno if they abandoned that idea or improved it massively, but I don’t remember any other game ever doing that.

My memory could also be being fuzzy. Might have been more like Oblivion and Skyrim.

As for the megatexture thing, it’s not done anymore because it’s not needed. The reason they had to have textures load back in was because the 360/PS3 only had 512MB of total RAM, and while the 360 had shared RAM, the PS3 had two 256MB sticks for the CPU and GPU respectively. Nowadays even the Xbox 1 is rocking 8GB.

I thought Megatextures were more to avoid the tiled look of many textured landscapes at the time. The idea that the artists can zoom into any point and paint what they need without worrying that it will then appear somewhere else on the map.

Looking around, some people seem to think they were replaced by virtual texturing, but I’ve been out the loop for a long time so haven’t really kept up with what that is. I assume it allows much the same, but far more efficient than a giant texture map. Death Stranding is an example that must use something similar, because as you move about you wear down permanent paths over the landscape.

Right I think I got confused. The megatexture is a huuuuge single texture covering the entire map geometry. It has a ridiculous size (at the time of Rage, it was 32,000 by 32,000). It also holds data for which bits should be treated as what type of terrain for footprints etc.

The problem with this approach is it eats a shit ton of RAM, which the 7th gen consoles didn’t have much of. Thus the only high quality textures that were loaded in were the ones the player could see, and loaded out when the player couldn’t.

Megatextures are used in all IdTech games since, but because they weren’t open world and/or targeted 8th gen consoles and later, with much more RAM, unloading the textures isn’t necessary.
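A quick back-of-the-envelope check of the RAM claim above, using the 32,000 by 32,000 figure and the 512MB console RAM quoted in this thread. The 4 bytes per texel (uncompressed RGBA) is my own assumption for illustration; real engines store textures compressed, so the true footprint would be smaller.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3


def texture_bytes(width, height, bytes_per_texel=4):
    """Raw size of a texture; 4 bytes per texel assumes uncompressed RGBA."""
    return width * height * bytes_per_texel


# Figures quoted in the thread, not independently verified.
megatexture = texture_bytes(32_000, 32_000)
console_ram = 512 * MIB

print(f"megatexture: {megatexture / GIB:.2f} GiB uncompressed")
print(f"console RAM: {console_ram / MIB:.0f} MiB total")
print(f"ratio:       {megatexture / console_ram:.1f}x the console's RAM")
```

Even with generous compression that is far more than would fit alongside everything else in 512MB, which lines up with only the visible parts being kept loaded.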

IdTech 7 does not use megatextures, the last engine to use it is IdTech 6

While the frame rate I’ve been getting is not at all consistent, I do get 45-90 fps, which is quite playable with Freesync. Running 3840x1600 w/5800X3D and 6700XT. Not too crazy of a system. From my understanding, it’s the 2000 and 3000 series Nvidia cards mainly having issues.

I had Freesync set to ON on my monitor and it caused a ton of flashing like a strobe light was on. When I turned it off it went away. Any idea what that could have been? I’d like to be able to use it.

I can’t say I’ve seen this issue. What GPU are you using, and are you using the latest drivers? What is the VRR setting in the game set to?

It’s an Nvidia RTX 2080 Super. Yeah I downloaded the latest drivers. I don’t see VRR. I see VRS and I have it on. Also have vsync on.

Yeah I meant VRS. I have VRS on and Freesync enabled on my monitor. I don’t know why you would have problems unless it’s specific to Nvidia, your monitor, or some other software co-morbidity.

I’ll check it out. Thanks for the help

My 2070 super has been running everything smoothly on High at 3440x1440 (CPU is a Ryzen 5600X, game installed on an M.2 SSD). I haven’t been closely monitoring frames, but I cannot think of a single instance where it’s noticeably dropped, which for me usually means 50+ fps for games like this. I may even test running on some higher settings, but I’ve done very little tweaking/testing.

I did, however, install a mod from the very beginning that lets me use DLSS, which likely helps a ton.

That’s great. There are others that are having performance issues though. I hope it gets sorted out for them.

3080 here with zero issues. Running on ultra everything I get 50-60 fps consistently with dips down to 30 once or twice briefly in really busy areas. Also at 2160p resolution too.

Also seems to run just fine so far on my 3080ti, 12700k, though perhaps the lukefz dlss3 mod helps?

DLSS3 is only supported on the 4 series of nvidia GPUs

Because everyone can run out and get a 4x because of Starfield. What a chode lol

Because everyone can run out and get a 4x because of Starfield. What a chode lol

When did I ever suggest anyone “run out and get a 4x”? Don’t upgrade your GPU (assuming it’s within the specified requirements), wait for patches, drivers, and the inevitable community patch.

M8, I wasn’t talking about you, just the article. Nothing but love for my fellows, here… and even opinions I don’t much like. ;)

I love that someone downvotes this. Ahhh the internet. Whoever was offended by my explanation and thinks it’s not an appropriate post… I hope something good happens to you today.

M8, I wasn’t talking about you, just the article.

Got it. Sorry for my confusion!

Idk what you guys are talking about lol. Runs perfectly with my 4090 13900k 32GB DDR5

Who’s your liquid nitrogen supplier?

Wait? Only 32GB of RAM? And it runs?!

Well it does…

Games are somehow too CPU heavy these days even though they aren’t simulating the entire world like Kenshi, just stuff around you, so even though I upgraded my gpu I can barely get to 30fps. Also had this problem with Wolong, Hogwarts and Wild Hearts.

This is what happens when consoles improve their CPUs.

Suddenly they’ve got more cycles than they know what to do with, so they waste them on frivolous unnoticeable shit. Now you don’t have that extra headroom to get you from console 30fps to PC 60fps+. You’re on a much more even footing than PCs ever were with the underpowered (even at release) PS4 and Xbox One.

You’ll struggle to get a CPU that does double what a PS5 can, and if it’s being held back by a single thread performance (likely), there’s nothing you’ll be able to do to get double that.

I agree, I have an i7-8700k and a 2080super which I’d say are like mid to high level specs and I have a terrible time running Wild Hearts and Starfield. Such a damn shame too as a big MHW and MHR fan I was really looking forward to Wild Hearts and just couldn’t run the game well at all. At this point I’m just not surprised when a triple A game runs like dog water on my system, usually these games are free on gamepass I try them out and 5 minutes later I uninstall.

Indies are where it’s at nowadays.

I wouldn’t consider a 8700k or a 2080 super high level specs or even mid level right now.

Consider that an 8700k is slower than a 13400f today which is considered the absolute lower end of the mid range, realistically 13600k or 13700 is the mid range on the intel side.

To be blunt the 8700k is 5 years old.

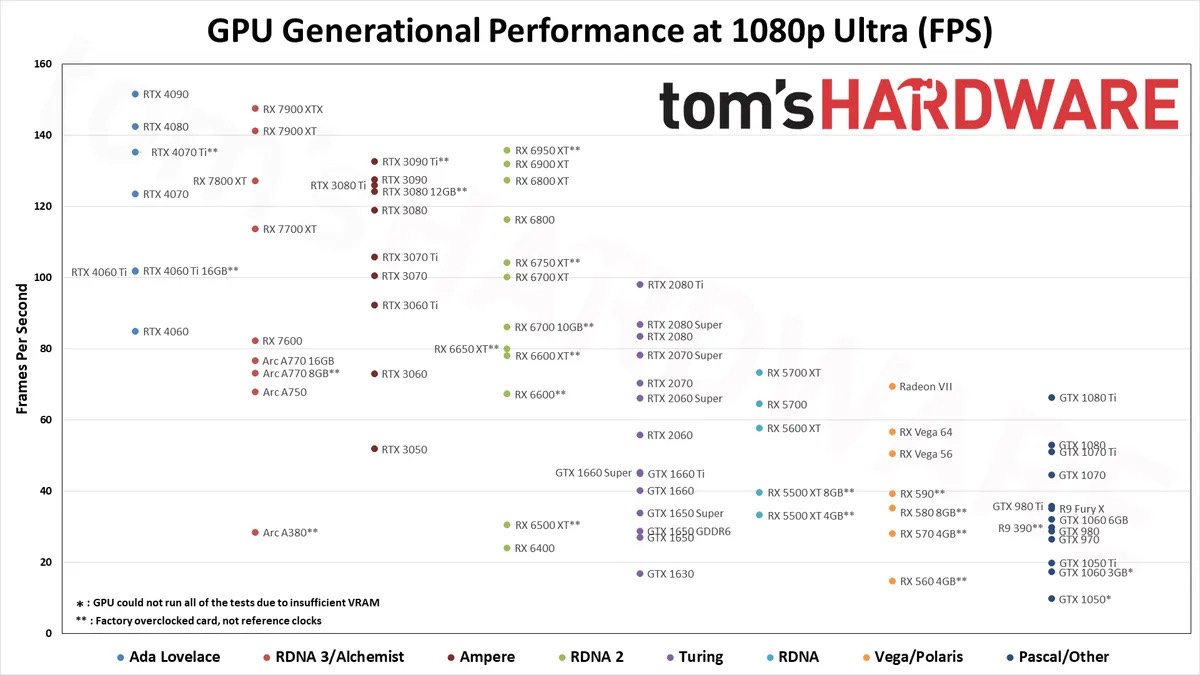

The 2080S is, well, look at this chart and make your own decision

I think a lot of people are just not appreciative of how out of date their hardware is relative to consoles atm